Over the past year, something has quietly redrawn the competitive map of AI. The world's largest cloud providers — Microsoft and AWS — now carry strategic partnerships with both OpenAI and Anthropic simultaneously. At first glance, it looks contradictory. To the analytically minded, it signals the opening of a new competitive era.

The logic becomes clear once you move past the model layer. The real contest isn't about who builds the most capable foundation model. It's about who controls the stateful runtime — the persistent environment where AI agents live, accumulate memory, hold authority, and act continuously across enterprise systems.

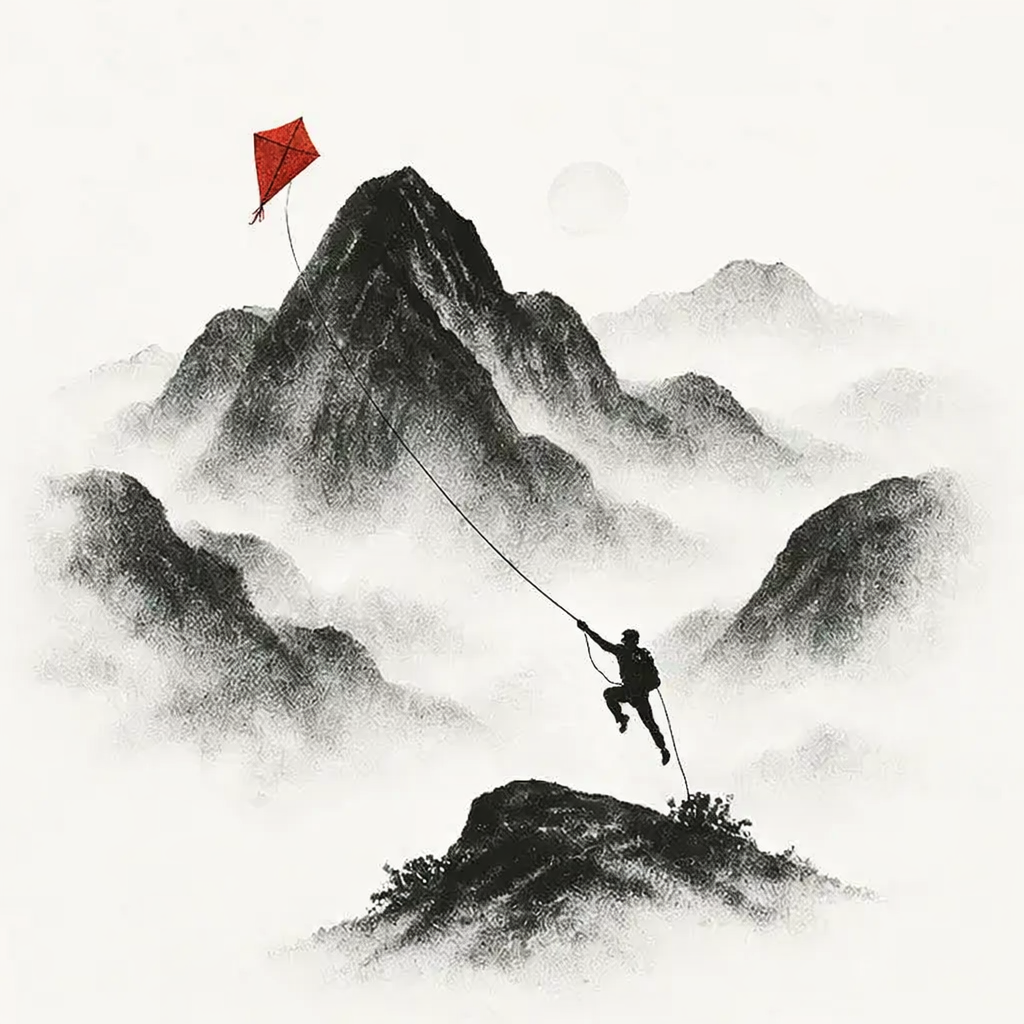

Think of the difference between a calculator and an employee with a desk, a calendar, and institutional memory. Stateless AI is the calculator. Stateful AI is the employee. The cloud providers are racing to own the office building.

THE RUNTIME THESIS

This is the strategic frontier that will define the next one, two, and four years. Enterprise leaders who recognize it early will build architectures that compound; those who don't will find themselves locked into someone else's substrate before they understand the stakes.

The Multi-Model Era Becomes the Default

In 2026, enterprises stop asking "Which model should we use?" and start asking "Which runtime should orchestrate all of them?" This is not a subtle semantic shift — it reflects a fundamental change in how AI capability is procured and deployed.

Three structural forces are converging to make this the inflection point:

MICROSOFT DEEPENS ITS ANTHROPIC INTEGRATION

Copilot begins routing complex, safety-critical tasks to Anthropic's reasoning models while preserving OpenAI for frontier generation tasks. The implicit message: no single model is sufficient for enterprise-grade reliability.

BEDROCK EMERGES AS AN AGENTIC OPERATING LAYER

AWS positions Bedrock not merely as model hosting, but as the stateful substrate for persistent agents — including an expanded runtime environment for OpenAI deployments. The bet is on becoming infrastructure-of-record for agentic workloads.

OPENAI GOES DELIBERATELY MULTI-CLOUD

A deliberate architectural separation emerges: stateless API access anchored to Microsoft Azure; stateful agent execution anchored to AWS. This is not a concession — it's a strategy to prevent single-provider dependency for OpenAI's own roadmap.

The practical implication for enterprise architects and MSPs is unambiguous: AI has become a multi-model, multi-cloud orchestration problem. Single-vendor AI strategies are not conservative — they are brittle. The architects who design for composability in 2026 will spend the next three years compounding that advantage.
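To make "designing for composability" concrete, here is a minimal sketch of model routing treated as its own layer. Everything in it is an assumption made for illustration: the `Task` categories, the `ModelRouter` class, and the two stub backends stand in for real provider SDK calls and do not reflect any vendor's actual API.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical task shape; the article only distinguishes
# safety-critical reasoning work from generation work.
@dataclass(frozen=True)
class Task:
    kind: str    # e.g. "safety_critical" or "generation"
    prompt: str

# A backend is any callable that takes a prompt and returns text.
Backend = Callable[[str], str]

def reasoning_backend(prompt: str) -> str:
    # Stub standing in for a reasoning-oriented model endpoint.
    return f"[reasoning-model] {prompt}"

def generation_backend(prompt: str) -> str:
    # Stub standing in for a generation-oriented model endpoint.
    return f"[generation-model] {prompt}"

class ModelRouter:
    """Maps task kinds to backends, with a fallback for unknown kinds."""

    def __init__(self, routes: Dict[str, Backend], default: Backend):
        self._routes = routes
        self._default = default

    def route(self, task: Task) -> str:
        backend = self._routes.get(task.kind, self._default)
        return backend(task.prompt)

router = ModelRouter(
    routes={
        "safety_critical": reasoning_backend,
        "generation": generation_backend,
    },
    default=generation_backend,
)

print(router.route(Task("safety_critical", "review this contract clause")))
print(router.route(Task("generation", "draft a product announcement")))
```

Because callers depend only on `ModelRouter.route`, swapping or adding a provider changes the routing table rather than every call site, which is the property the composability argument rests on.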

Stateful Agents Reshape Enterprise Architecture

By 2027, the industry will have fully internalized a distinction that sounds technical but carries profound strategic weight: the difference between stateless AI and stateful AI.

STATELESS AI

The classic pattern: call a model, receive a response, discard context. High throughput, zero continuity. Appropriate for discrete tasks; insufficient for complex, longitudinal workflows.

STATEFUL AI

Long-running agents with persistent memory, managed identity, cross-system authority, and the ability to initiate — not merely respond. This is AI that operates like infrastructure, not a feature.
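The contrast can be sketched in a few lines of code. This is an illustrative toy under stated assumptions: `fake_model` is a stand-in for a real model call, `StatefulAgent` is a hypothetical class, and the JSON file on disk is a placeholder for whatever durable store a real runtime would provide.

```python
import json
from pathlib import Path
from typing import List

def fake_model(prompt: str, history: List[str]) -> str:
    """Stand-in for a model call; reports how much context it was given."""
    return f"reply to {prompt!r} with {len(history)} prior turns"

def stateless_call(prompt: str) -> str:
    # Stateless pattern: nothing survives between calls.
    return fake_model(prompt, history=[])

class StatefulAgent:
    """Hypothetical agent whose memory persists across process restarts."""

    def __init__(self, agent_id: str, store_dir: Path):
        self.agent_id = agent_id
        self._path = store_dir / f"{agent_id}.json"
        # Reload prior memory if this agent has run before.
        self.history: List[str] = (
            json.loads(self._path.read_text()) if self._path.exists() else []
        )

    def ask(self, prompt: str) -> str:
        reply = fake_model(prompt, self.history)
        self.history.append(prompt)
        # Persist after every turn so state outlives this object.
        self._path.write_text(json.dumps(self.history))
        return reply
```

Creating a second `StatefulAgent` with the same `agent_id` and `store_dir` picks up the first one's history, whereas every `stateless_call` starts from zero. Whoever operates that store is, in the article's terms, the party that controls the agent's state.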

This distinction will reshape cloud procurement, vendor selection, and enterprise architecture in ways that mirror how containerization reshaped DevOps a decade prior. The platform positions crystallize:

AWS leads the stateful runtime race. Bedrock becomes the substrate for persistent agents that monitor, decide, and act across enterprise systems, with the durability guarantees enterprises require.

Microsoft doubles down on the control plane. Entra provides identity, Microsoft Graph provides context, Copilot Studio provides orchestration. The bet: whoever owns authorization owns agentic trust.

For enterprise leadership, the organizational implication is significant: AI shifts from "tool" to autonomous collaborator. Governance, auditability, and agent identity management stop being compliance footnotes and become core architectural requirements. The CISO and the CTO find themselves at the same table, negotiating the same decisions.
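As a rough illustration of what treating agent identity and auditability as architectural requirements could look like, the sketch below gives each agent an explicit identity with a permitted action set and records every attempted action, allowed or not. The names `AgentIdentity`, `AuditLog`, and `perform` are hypothetical, and the in-memory list stands in for a real tamper-resistant audit store.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import FrozenSet, List

@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical managed identity: who the agent is and what it may do."""
    agent_id: str
    allowed_actions: FrozenSet[str]

@dataclass
class AuditLog:
    """In-memory placeholder for a durable audit trail."""
    entries: List[dict] = field(default_factory=list)

    def record(self, agent_id: str, action: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "allowed": allowed,
        })

def perform(agent: AgentIdentity, action: str, log: AuditLog) -> bool:
    """Check authorization and log the attempt, whether or not it is allowed."""
    allowed = action in agent.allowed_actions
    log.record(agent.agent_id, action, allowed)
    return allowed

agent = AgentIdentity("invoice-bot", frozenset({"read_invoice"}))
log = AuditLog()
perform(agent, "read_invoice", log)     # permitted, logged
perform(agent, "approve_payment", log)  # denied, still logged
```

Logging denied attempts alongside permitted ones is a deliberate choice here: the security team and the platform team can then read the same record of what the agent tried to do.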

Cloud Providers Become AI Operating Systems

By 2029, the cloud landscape will be functionally unrecognizable compared to 2024. The term "cloud provider" will be an anachronism — the more accurate descriptor will be AI operating system.

Microsoft: the enterprise AI control plane.

Copilot becomes the universal interface. Microsoft Graph becomes the universal context engine. Entra identity becomes the universal trust fabric.

The competitive moat is not the model — it is the organizational graph that no external vendor can replicate.

AWS: the runtime layer for agentic compute.

Bedrock hosts persistent, memory-bearing agents that operate continuously across enterprise systems.

State becomes the new form of lock-in — and the new source of durable enterprise value. The organization that moves its agent state to AWS makes a long-term infrastructure commitment.

Models become modular infrastructure.

The value proposition shifts from "the smartest model" to "the most reliable, governable agent ecosystem."

Both OpenAI and Anthropic find competitive differentiation in trust and reliability rather than pure benchmark performance.

Architecture replaces procurement.

Organizations don't choose a model. They choose a runtime strategy — and that choice cascades into data governance, identity architecture, and vendor relationships for years.

The most consequential IT decisions of 2029 are being made in 2026.

In this environment, MSPs and systems integrators become the defining professional services category of the decade. Not because they deploy models, but because they architect the connective tissue between identity, data lineage, agent orchestration, multi-model routing, and cross-cloud optimization. This is the new modernization stack — and the demand for it will far outpace the supply of practitioners who understand it.

What Technology and Finance Leaders Must Decide Now

The organizations that will lead in 2029 are making specific architectural bets today. This is not about predicting which model wins — it's about building infrastructure that remains coherent regardless of which model wins.

Identity, governance, lineage, and observability are preconditions for agentic AI, not afterthoughts. Organizations that have not solved these at scale will find every agentic initiative blocked by the same foundational gaps.

Single-model dependency is a concentration risk that compounds over time. The winning architecture is composable, with model routing treated as a first-class concern.

Where your agents live — and who manages their state — is a long-term infrastructure commitment with switching costs comparable to core banking systems. It deserves the same due diligence.

Discrete projects produce discrete value. Ecosystems compound. The organizations building agentic capability as a platform in 2026 will have structural advantages that point-solution competitors cannot close.

Cloud providers are no longer infrastructure utilities — they are strategic AI operating system partners. Procurement relationships, contract structures, and architectural dependencies need to reflect this shift.

We are entering a decade where AI agents will hold state, make decisions, and operate continuously across every layer of the enterprise. The cloud providers understand this — their partnership structures are designed around it.

The question every CIO, CTO, and technology investor must answer is no longer which model is best.

It's: "Where will our agents live — and who will control their state?"

The organizations that answer that question with clarity and conviction in 2026 will own the architecture of 2029.